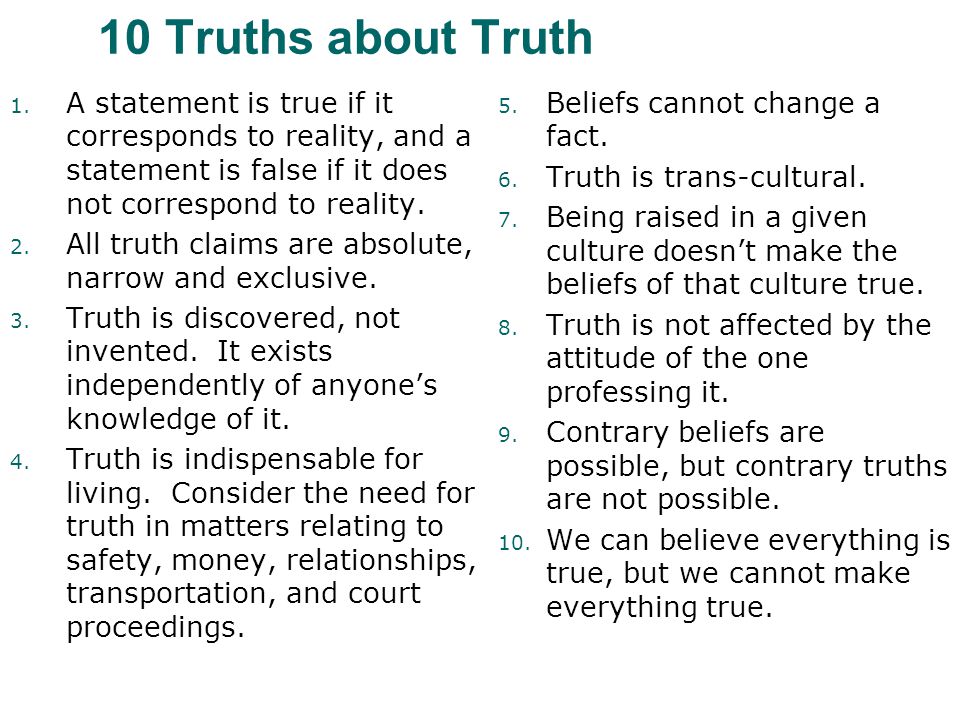

First, we need to be clear on precisely what “truth” is. The best definition I’ve found is the “10 Truths about Truth,” by philosophy professor Christopher Ullman…

Finding the truth, however, is rarely straightforward. That’s why it’s important to (1) develop and foster a critical thinking mindset and (2) use ‘clear thinking tools’* to help in your search for truth.

*Done correctly, the right mindset — or right approach — to thinking about something is really all you need, no specific “thinking tools” necessary. But since we’re human and thus prone to fatigue, forgetfulness, and emotions (including our own prejudicial biases), it’s helpful to have some ‘thinking tools’ at our side to compensate for these unavoidable human weaknesses. It’s the reason pilots need checklists.

The Critical Thinking Mindset

In the Introduction, we briefly covered “The Scientific Method” and how it helped revolutionize problem-solving in science. Its success taught us the need to keep an open mind about better ways to explain things. That openness is part of a critical thinking mindset.

The only way you can be sure to find the truth amongst competing viewpoints — whether you’re debating someone in person or searching for the truth online — is to be able to happily and confidently claim the seven clear thinking traits shown below:

- I recognize the difference between facts and opinions.

- I evaluate evidence to decide if an opinion is reasonable.

- I willingly change my mind if evidence shows my opinion may be incorrect.

- I look at a problem from different angles.

- I ask relevant and probing questions.

- I recognize preconceptions, bias, and values in myself and others.

- I question the logic of my own beliefs and opinions.

“If you want to assert a truth, first make sure it’s not just an opinion that you desperately want to be true.” – Neil deGrasse Tyson

Critical Thinking Tools

From (certain parts of) Philosophy

Much of philosophy is concerned with how to think and communicate properly. Since some areas can be described as ‘thinking about thinking,’ IMO there’s a risk of ‘over-thinking.’

Happily, however, there are at least three wonderful sources of ‘clear thinking tools’ that don’t require any more than ‘good ol’ horse sense’ to understand and appreciate. We’ll briefly cover these sources and their tools in the following order:

- Carl Sagan’s “Baloney Detection Kit.”

- Dan Dennett and “Occam’s Broom.”

- Michael Shermer on “Conspiracy Theories.”

“Fake News” can be debunked using a critical thinking mindset and many of the same “clear thinking tools” we’ll cover here, but it has some unique aspects, which are covered in the sub-section of this chapter titled “Fake News and Conspiracy ‘Theories.’”

The tools described by the above three ‘heroes of critical thinking’ are especially valuable in today’s world of social media, where it can otherwise be impossible to differentiate credible news and information from what is intentionally fake or innocently erroneous.

The tools come in two general categories. Some overlap but all are useful in the search for truth:

- Tools for crafting your own intellectually fair, cohesive “reasoned argument”

- Tools for recognizing a fallacious, intellectually unfair argument

One example of these ‘thinking tools’ with which you may already be familiar is “Occam’s Razor,” named after the 14th-century Franciscan friar William of Ockham. (The spelling differs, but it refers to the same person.) It’s a rule of thumb that says if two or more solutions adequately explain something, usually the simplest one (the one that makes the fewest assumptions) is the correct one. (If a more complex answer better explains something, then the more complex one is likely correct.)

Example: A person clearly remembers being taken from bed at night up into an alien spacecraft, examined, and then deposited back into bed.

Which explanation is more likely?

1. Person was actually taken from bed up into hovering alien spacecraft, examined, and placed back into bed.

2. Person experienced a lucid dream.

Ans: #2 is more likely, because it makes fewer assumptions. (This doesn’t prove either answer, but it suggests which is more likely.)

An example of a thinking tool for recognizing a fallacious argument, one you’re likely familiar with: rhetorical questions (a.k.a. “loaded questions”). These assume the premise of the question is true, even when assuming so would be absurd. Such a question gets its impact from its first read, similar to a billboard with a witty advert. In fact, some loaded questions can appear thoughtful, even brilliant. Take this one…

“If brains are computers, who designs the software?”

…As you can see in the above rhetorical question, two major assumptions must be true before an answer could be cobbled together: that brains are computers, and that their ‘software’ needs a designer.

Now on to the three authors and their tools:

The first source comes from one of America’s most prolific scientists who was also one of science’s best philosophical thinkers — Carl Sagan.

Carl Sagan’s “Baloney Detection Kit”

In his timeless, eye-opening 1995 book, “The Demon-Haunted World,” Sagan compiles a list of nine “tools for skeptical thinking,” followed by twenty ‘sneaky philosophical tricks’ (my words) to look out for. (Professional philosophers have identified many other, more nuanced fallacious argument techniques, but Sagan’s list identifies the main ones with his knack for clarity.)

Carl Sagan’s words:

“What skeptical thinking boils down to is the means to construct, and to understand, a reasoned argument and—especially important—to recognize a fallacious or fraudulent argument. The question is not whether we like the conclusion that emerges out of a train of reasoning, but whether the conclusion follows from the premise or starting point and whether that premise is true.

Among the tools (bolding is mine):

- Wherever possible there must be independent confirmation of the “facts.”

- Encourage substantive debate on the evidence by knowledgeable proponents of all points of view.

- Arguments from authority carry little weight — “authorities” have made mistakes in the past. They will do so again in the future. Perhaps a better way to say it is that in science there are no authorities; at most, there are experts.

- Spin more than one hypothesis. If there’s something to be explained, think of all the different ways in which it could be explained. Then think of tests by which you might systematically disprove each of the alternatives. What survives, the hypothesis that resists disproof in this Darwinian selection among “multiple working hypotheses,” has a much better chance of being the right answer than if you had simply run with the first idea that caught your fancy.

- Quantify. If whatever it is you’re explaining has some measure, some numerical quantity attached to it, you’ll be much better able to discriminate among competing hypotheses. What is vague and qualitative is open to many explanations. Of course there are truths to be sought in the many qualitative issues we are obliged to confront, but finding them is more challenging.

- If there’s a chain of argument, every link in the chain must work (including the premise) — not just most of them.

- Occam’s Razor. This convenient rule-of-thumb urges us when faced with two hypotheses that explain the data equally well to choose the simpler.

- Always ask whether the hypothesis can be, at least in principle, falsified. Propositions that are untestable, unfalsifiable are not worth much. Consider the grand idea that our Universe and everything in it is just an elementary particle — an electron, say — in a much bigger Cosmos. But if we can never acquire information from outside our Universe, is not the idea incapable of disproof? You must be able to check assertions out. Inveterate skeptics must be given the chance to follow your reasoning, to duplicate your experiments and see if they get the same result.

- Try not to get overly attached to a hypothesis just because it’s yours. It’s only a way station in the pursuit of knowledge. Ask yourself why you like the idea. Compare it fairly with the alternatives. See if you can find reasons for rejecting it. If you don’t, others will.*

* This is a problem that affects jury trials. Retrospective studies show that some jurors make up their minds very early— perhaps during opening arguments—and then retain the evidence that seems to support their initial impressions and reject the contrary evidence. The method of alternative working hypotheses is not running in their heads.” — Carl Sagan

Sagan continues with his list of twenty fallacious argument techniques:

“In addition to teaching us what to do when evaluating a claim to knowledge, any good baloney detection kit must also teach us what not to do. It helps us recognize the most common and perilous fallacies of logic and rhetoric. Many good examples can be found in religion and politics, because their practitioners are so often obliged to justify two contradictory propositions.

1. Ad hominem — Latin for “to the man,” attacking the arguer and not the argument (e.g., The Reverend Dr. Smith is a known Biblical fundamentalist, so her objections to evolution need not be taken seriously)

2. Argument from authority (e.g., President Richard Nixon should be re-elected because he has a secret plan to end the war in Southeast Asia — but because it was secret, there was no way for the electorate to evaluate it on its merits; the argument amounted to trusting him because he was President: a mistake, as it turned out)

3. Argument from adverse consequences (e.g., A God meting out punishment and reward must exist, because if He didn’t, society would be much more lawless and dangerous — perhaps even ungovernable. Or: The defendant in a widely publicized murder trial must be found guilty; otherwise, it will be an encouragement for other men to murder their wives)

4. Appeal to ignorance — the claim that whatever has not been proved false must be true, and vice versa (e.g., There is no compelling evidence that UFOs are not visiting the Earth; therefore UFOs exist — and there is intelligent life elsewhere in the Universe. Or: There may be seventy kazillion other worlds, but not one is known to have the moral advancement of the Earth, so we’re still central to the Universe.) This impatience with ambiguity can be criticized in the phrase: absence of evidence is not evidence of absence.

5. Special pleading, often to rescue a proposition in deep rhetorical trouble (e.g., How can a merciful God condemn future generations to torment because, against orders, one woman induced one man to eat an apple? Special plead: you don’t understand the subtle Doctrine of Free Will. Or: How can there be an equally godlike Father, Son, and Holy Ghost in the same Person? Special plead: You don’t understand the Divine Mystery of the Trinity. Or: How could God permit the followers of Judaism, Christianity, and Islam — each in their own way enjoined to heroic measures of loving kindness and compassion — to have perpetrated so much cruelty for so long? Special plead: You don’t understand Free Will again. And anyway, God moves in mysterious ways.)

6. Begging the question, also called assuming the answer (e.g., We must institute the death penalty to discourage violent crime. But does the violent crime rate in fact fall when the death penalty is imposed? Or: The stock market fell yesterday because of a technical adjustment and profit-taking by investors — but is there any independent evidence for the causal role of “adjustment” and profit-taking; have we learned anything at all from this purported explanation?)

7. Observational selection, also called the enumeration of favorable circumstances, or as the philosopher Francis Bacon described it, counting the hits and forgetting the misses (e.g., A state boasts of the Presidents it has produced, but is silent on its serial killers)

8. Statistics of small numbers — a close relative of observational selection (e.g., “They say 1 out of every 5 people is Chinese. How is this possible? I know hundreds of people, and none of them is Chinese. Yours truly.” Or: “I’ve thrown three sevens in a row. Tonight I can’t lose.”)

9. Misunderstanding of the nature of statistics (e.g., President Dwight Eisenhower expressing astonishment and alarm on discovering that fully half of all Americans have below average intelligence);

10. Inconsistency (e.g., Prudently plan for the worst of which a potential military adversary is capable, but thriftily ignore scientific projections on environmental dangers because they’re not “proved.” Or: Consider it reasonable for the Universe to continue to exist forever into the future, but judge absurd the possibility that it has infinite duration into the past);

11. Non sequitur — Latin for “It doesn’t follow” (e.g., Our nation will prevail because God is great. But nearly every nation pretends this to be true; the German formulation was “Gott mit uns”). Often those falling into the non sequitur fallacy have simply failed to recognize alternative possibilities;

12. Post hoc, ergo propter hoc — Latin for “It happened after, so it was caused by” (e.g., the Archbishop of Manila, Jaime Sin: “I know of … a 26-year-old who looks 60 because she takes [contraceptive] pills.” Or: Before women got the vote, there were no nuclear weapons)

13. Meaningless question (e.g., What happens when an irresistible force meets an immovable object? But if there is such a thing as an irresistible force there can be no immovable objects, and vice versa)

14. Excluded middle, or false dichotomy — considering only the two extremes in a continuum of intermediate possibilities (e.g., “Sure, take his side; my husband’s perfect; I’m always wrong.” Or: “Either you love your country or you hate it.” Or: “If you’re not part of the solution, you’re part of the problem”)

15. Short-term vs. long-term — a subset of the excluded middle, but so important I’ve pulled it out for special attention (e.g., We can’t afford programs to feed malnourished children and educate pre-school kids. We need to urgently deal with crime on the streets. Or: Why explore space or pursue fundamental science when we have so huge a budget deficit?);

16. Slippery slope, related to excluded middle (e.g., If we allow abortion in the first weeks of pregnancy, it will be impossible to prevent the killing of a full-term infant. Or, conversely: If the state prohibits abortion even in the ninth month, it will soon be telling us what to do with our bodies around the time of conception);

17. Confusion of correlation and causation (e.g., A survey shows that more college graduates are homosexual than those with lesser education; therefore education makes people gay. Or: Andean earthquakes are correlated with closest approaches of the planet Uranus; therefore — despite the absence of any such correlation for the nearer, more massive planet Jupiter — the latter causes the former)

18. Straw man — caricaturing a position to make it easier to attack (e.g., Scientists suppose that living things simply fell together by chance — a formulation that willfully ignores the central Darwinian insight, that Nature ratchets up by saving what works and discarding what doesn’t. Or — this is also a short-term/long-term fallacy — environmentalists care more for snail darters and spotted owls than they do for people)

19. Suppressed evidence, or half-truths (e.g., An amazingly accurate and widely quoted “prophecy” of the assassination attempt on President Reagan is shown on television; but — an important detail — was it recorded before or after the event? Or: These government abuses demand revolution, even if you can’t make an omelette without breaking some eggs. Yes, but is this likely to be a revolution in which far more people are killed than under the previous regime? What does the experience of other revolutions suggest? Are all revolutions against oppressive regimes desirable and in the interests of the people?)

20. Weasel words (e.g., The separation of powers of the U.S. Constitution specifies that the United States may not conduct a war without a declaration by Congress. On the other hand, Presidents are given control of foreign policy and the conduct of wars, which are potentially powerful tools for getting themselves re-elected. Presidents of either political party may therefore be tempted to arrange wars while waving the flag and calling the wars something else — “police actions,” “armed incursions,” “protective reaction strikes,” “pacification,” “safeguarding American interests,” and a wide variety of “operations,” such as “Operation Just Cause.” Euphemisms for war are one of a broad class of reinventions of language for political purposes. Talleyrand said, “An important art of politicians is to find new names for institutions which under old names have become odious to the public”)” – Carl Sagan

Sagan ends this chapter of “The Demon-Haunted World” with a disclaimer:

“Like all tools, the baloney detection kit can be misused, applied out of context, or even employed as a rote alternative to thinking. But applied judiciously, it can make all the difference in the world — not least in evaluating our own arguments before we present them to others.” – Carl Sagan

Note: Earlier I referred to Sagan’s list of twenty fallacious argument techniques as “sneaky philosophical tricks,” but most people are likely unaware they’re using them. You might even recognize some you’ve used yourself. That’s because if we have an emotional investment in a topic we’re arguing, we tend to ‘cut to the chase,’ short-cutting a reasoned argument, so convinced are we that the truth of our position is self-evident.

Maria Popova, author of BrainPickings.org, created a great downloadable PDF of the “Baloney Detection Kit,” edited for clarity. It’s a fantastic version, IMO.

Dennett’s description of “Occam’s Broom”

The second source is a more recent (2013) book by the eminent philosopher Daniel C. Dennett, “Intuition Pumps and Other Tools for Thinking,” in which Dennett distills twelve ‘everyday’ thinking tools right at the start of the book. To my knowledge, there has never before been a book like it. (There’s a total of seventy ‘thinking tools’ in the book for the more determined reader.)

Dennett’s book and his thinking tools are a great read, highly recommended, but are philosophically more involved than Sagan’s. (After all, Daniel Dennett is a professional philosopher, unlike Sagan.) So I’ll leave it as recommended reading.

There is, however, one of Dennett’s “…Dozen General Thinking Tools” that deserves coverage here because it is so widespread and can be extremely insidious, fooling even die-hard critical thinkers. It gets its power from its invisibility…

Occam’s Broom

The name is a play on Occam’s Razor, but the term was coined more recently by molecular biologist Sydney Brenner. It refers to ‘sweeping inconvenient facts under the rug’ and is used to great effect all the time. Like rhetorical questions, it’s sometimes employed as an intellectually dishonest ploy to get people to accept a ‘pet viewpoint’ as valid.

It’s particularly effective against people who are ignorant of the facts surrounding a viewpoint and is the main underhanded philosophical tool (‘weapon’) used by conspiracy theorists and propagandists. This quote about Occam’s Broom from Dennett’s book explains it nicely:

“The practice is particularly insidious when used by propagandists who direct their efforts at the lay public, because like Sherlock Holmes’s famous clue about the dog that didn’t bark in the night, the absence of a fact that has been swept off the scene by Occam’s Broom is unnoticeable except by experts. For instance, creationists invariably leave out a wealth of embarrassing evidence that their “theories” can’t handle, and to a nonbiologist their carefully-crafted accounts can be quite convincing simply because the lay reader can’t see what isn’t there.

How on earth can you keep on the lookout for something invisible?” – Daniel Dennett

Michael Shermer – on Conspiracy Theories

Michael Shermer has written over 200 columns for Scientific American magazine. Two of his TED talks are listed in the top 100 TED talks of all time. As founder of The Skeptics Society and editor-in-chief of its magazine, Skeptic, he is certainly an inveterate critical thinker.

Shermer helped create a fascinating and enlightening infographic that covers the ‘who, what, where, and why’ of conspiracy theories like no other publication, IMO. (Link to the colorful and ‘fun’ infographic PDF below.)

Following is just one section of the publication, but the whole thing is an illuminating must-read, IMO. (One section, “Testing 9/11 Conspiracy Claims” brings critical thinking tools to bear on that popular conspiracy theory.)

Top Ten Ways to Test Conspiracies

by Michael Shermer and Pat Linse

Some conspiracy theories are true, some false. How can one tell the difference? The more the conspiracy theory manifests the following characteristics, the less likely it is to be true.

- Proof of the conspiracy supposedly emerges from a pattern of “connecting the dots” between events that need not be causally connected. When no evidence supports these connections except the allegation of the conspiracy, or when the evidence fits equally well to other causal connections—or to randomness—the conspiracy theory is likely false.

- The agents behind the pattern of the conspiracy would need nearly superhuman power to pull it off. Most of the time in most circumstances, people are not nearly so powerful as we think they are.

- The conspiracy is complex and its successful completion demands a large number of elements.

- The conspiracy involves large numbers of people who would all need to keep silent about their secrets.

- The conspiracy encompasses some grandiose ambition for control over a nation, economy or political system. If it suggests world domination, it’s probably false.

- The conspiracy theory ratchets up from small events that might be true to much larger events that have much lower probabilities of being true.

- The conspiracy theory assigns portentous and sinister meanings to what are most likely random and insignificant events.

- The theory tends to commingle facts and speculations without distinguishing between the two and without assigning degrees of probability or of factuality.

- The theorist is extremely and indiscriminately suspicious of any and all government agencies or private organizations.

- The conspiracy theorist refuses to consider alternative explanations, rejecting all disconfirming evidence for his theory and blatantly seeking only confirmatory evidence. – Michael Shermer and Pat Linse

Here’s the link to the well-done, colorful infographic:

Conspiracy Theories: Who Believes Them and Why, and How to Determine if a Conspiracy Theory is True or False

To summarize the best way to deal with conspiracy theories and their ‘theorists,’ perhaps we need to look no further than the oft-quoted phrase, “Extraordinary claims require extraordinary evidence.”